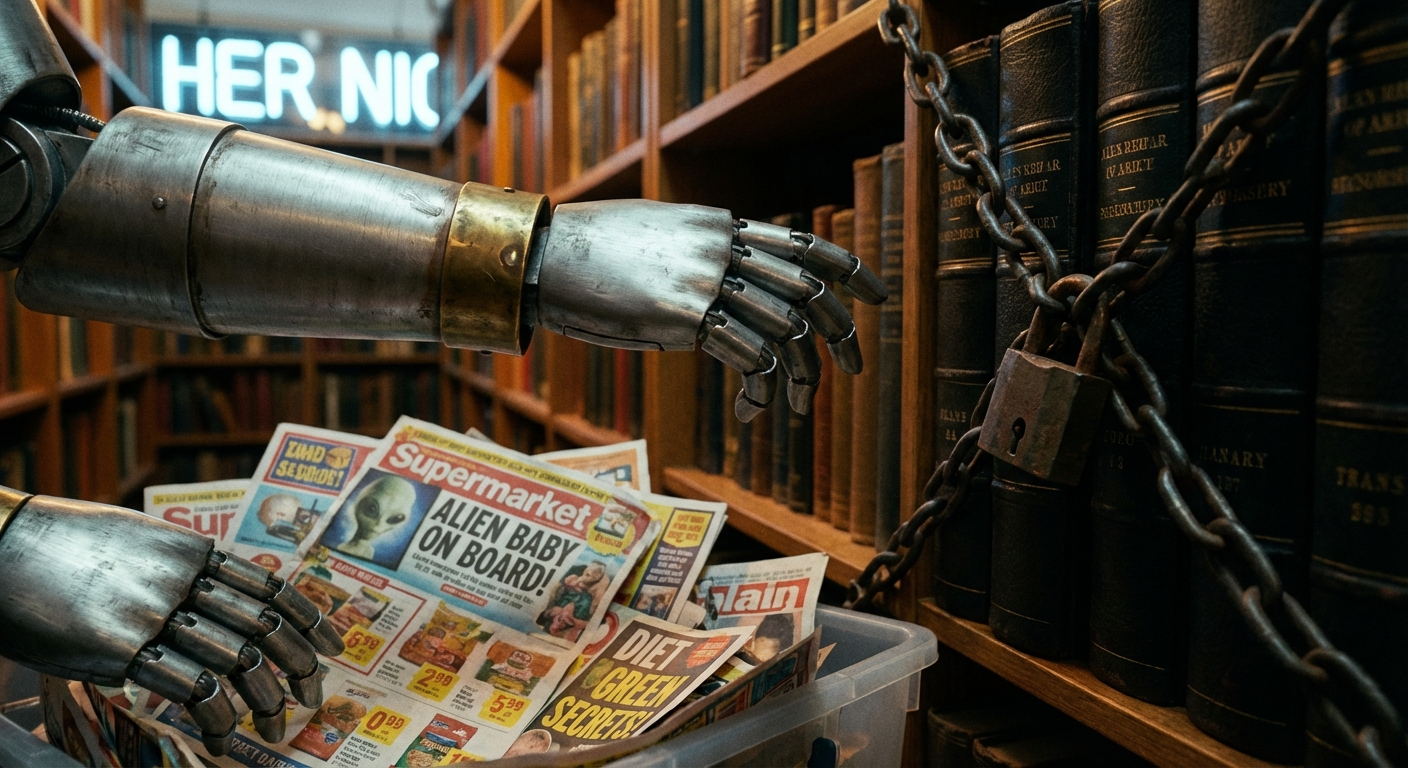

The best websites are blocking AI from reading them, so future bots are going to be trained on the absolute trash left behind.

As top-tier publishers block AI crawlers, the pool of available training data skews toward low-quality content and misinformation. This 'adverse selection' means that the more high-quality publishers opt out, the more likely future models are to be trained on the garbage left behind.
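To make the mechanism concrete, here is a minimal sketch of how a quality-blocking gradient drags down the average quality of what stays crawlable. Every number in it is a hypothetical assumption chosen for illustration (three quality tiers, 100 sources each, and a top tier that blocks at exactly six times the rate of the bottom tier); none of it is data from the paper, and `remaining_mean_quality` is an illustrative helper, not the authors' method.

```python
def remaining_mean_quality(tiers):
    """Mean quality of sources still accessible to crawlers.

    tiers: list of (quality_score, source_count, block_rate) tuples.
    All values are hypothetical; they are not taken from the paper.
    """
    # Weight each tier by the number of sources that did NOT block.
    accessible = [(q, n * (1.0 - b)) for q, n, b in tiers]
    total = sum(w for _, w in accessible)
    return sum(q * w for q, w in accessible) / total


# Hypothetical tiers: (quality score in [0, 1], number of sources, block rate).
tiers = [
    (0.9, 100, 0.60),  # high-factual outlets, assumed to block most often
    (0.5, 100, 0.30),  # mid-tier outlets
    (0.1, 100, 0.10),  # low-factual sources, assumed to block at 1/6 the top rate
]

# Baseline: nobody blocks, so every source counts equally.
before = remaining_mean_quality([(q, n, 0.0) for q, n, _ in tiers])
# With the assumed gradient: the best sources leave the pool fastest.
after = remaining_mean_quality(tiers)

print(f"mean quality with no blocking:   {before:.2f}")
print(f"mean quality after blocking:     {after:.2f}")
```

Under these made-up numbers the average quality of the accessible pool falls from 0.50 to 0.40, even though no low-quality source was added. The drop comes entirely from who leaves, which is the adverse-selection point.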

Adverse Selection in the AI Data Commons

SSRN · 6438640

Generative AI depends on high-quality web content, yet no market compensates its producers. We document adverse selection in this AI data commons: facing a binary opt-out choice, the highest-quality producers exit first, degrading the remaining commons. Studying media and news sites at scale, we find a steep quality-blocking gradient: high-factual outlets block at nearly six times the rate of low-factual sources, with misinformation sources remaining most accessible. Publishers strategically tar