Prunes 85% of visual tokens in Vision-Language-Action (VLA) models while retaining 94% accuracy for autonomous driving.

March 30, 2026

Original Paper

ETA-VLA: Efficient Token Adaptation via Temporal Fusion and Intra-LLM Sparsification for Vision-Language-Action Models

arXiv · 2603.25766

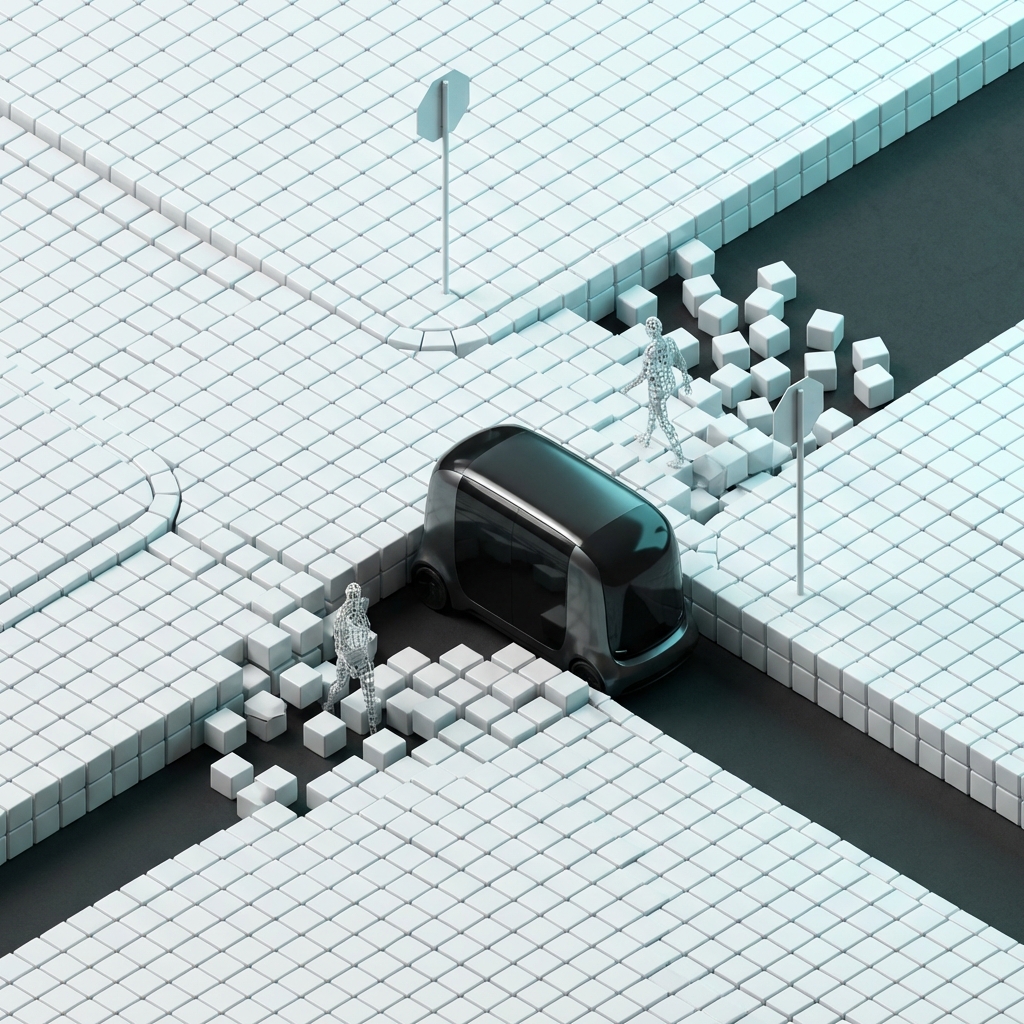

AI-generated illustration

The Takeaway

VLA models are compute-heavy because self-attention cost grows quadratically with the number of tokens produced by multi-view video frames. This framework cuts FLOPs by 61% by dynamically identifying the "important" visual tokens, a selection strategy inspired by how human drivers allocate attention.
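To make the idea concrete, here is a minimal sketch of score-based visual token pruning. It is not the paper's algorithm: the importance scores, the `prune_visual_tokens` and `attention_pairs` helpers, and the toy token counts are all assumptions chosen for illustration. The sketch keeps the top 15% of visual tokens by score (pruning 85%) and counts query-key pairs to show why a shorter sequence reduces quadratic attention cost.

```python
def prune_visual_tokens(scores, keep_ratio=0.15):
    """Return indices of the highest-scoring visual tokens, in original order.

    `scores` is a hypothetical per-token importance score (for example,
    attention received from text tokens); how the paper computes
    importance is not shown here.
    """
    if not 0.0 < keep_ratio <= 1.0:
        raise ValueError("keep_ratio must be in (0, 1]")
    # Keep at least one token so the sequence never becomes empty.
    n_keep = max(1, round(len(scores) * keep_ratio))
    # Rank token indices from most to least important.
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    # Restore original order so positional structure is preserved.
    return sorted(ranked[:n_keep])


def attention_pairs(num_tokens):
    """Number of query-key pairs in full self-attention (quadratic in length)."""
    return num_tokens * num_tokens


# Toy numbers (assumed, not from the paper): 50 text tokens, 1000 visual tokens.
num_text, num_visual = 50, 1000
toy_scores = [(i * 37) % 1000 for i in range(num_visual)]  # arbitrary distinct scores
kept = prune_visual_tokens(toy_scores, keep_ratio=0.15)

before = attention_pairs(num_text + num_visual)
after = attention_pairs(num_text + len(kept))
print(f"kept {len(kept)} of {num_visual} visual tokens")
print(f"attention pairs: {before} -> {after}")
```

This counts attention pairs only. A full FLOP estimate would also include the feed-forward layers and the vision encoder, so the toy ratio here should not be expected to match the 61% reduction reported above.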

From the abstract

The integration of Vision-Language-Action (VLA) models into autonomous driving systems offers a unified framework for interpreting complex scenes and executing control commands. However, the necessity to incorporate historical multi-view frames for accurate temporal reasoning imposes a severe computational burden, primarily driven by the quadratic complexity of self-attention mechanisms in Large Language Models (LLMs). To alleviate this bottleneck, we propose ETA-VLA, an Efficient Token Adaptati